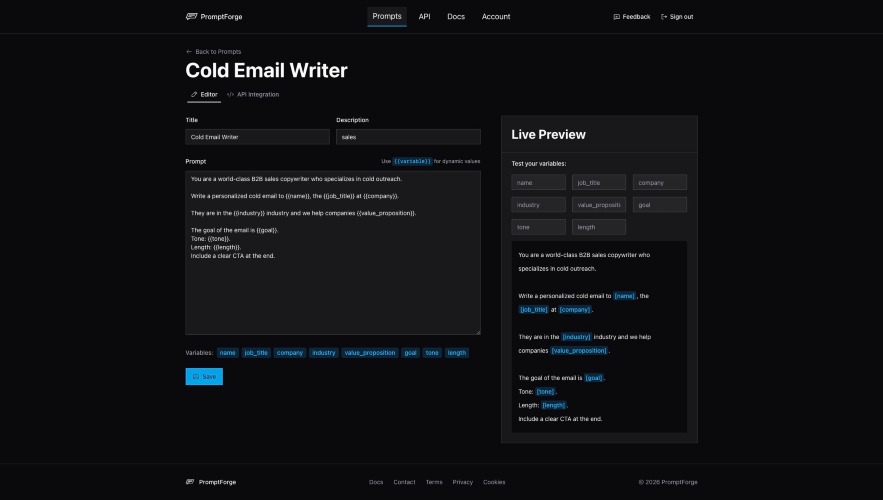

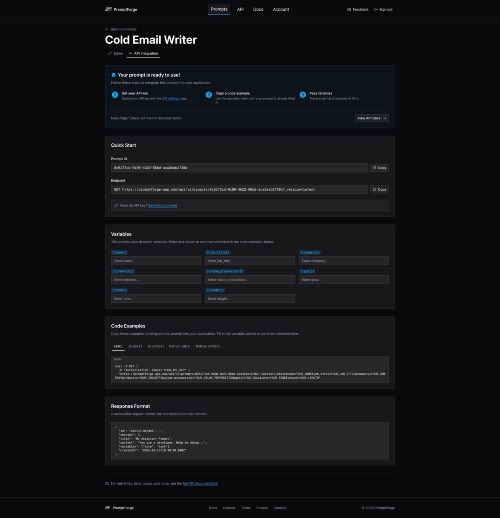

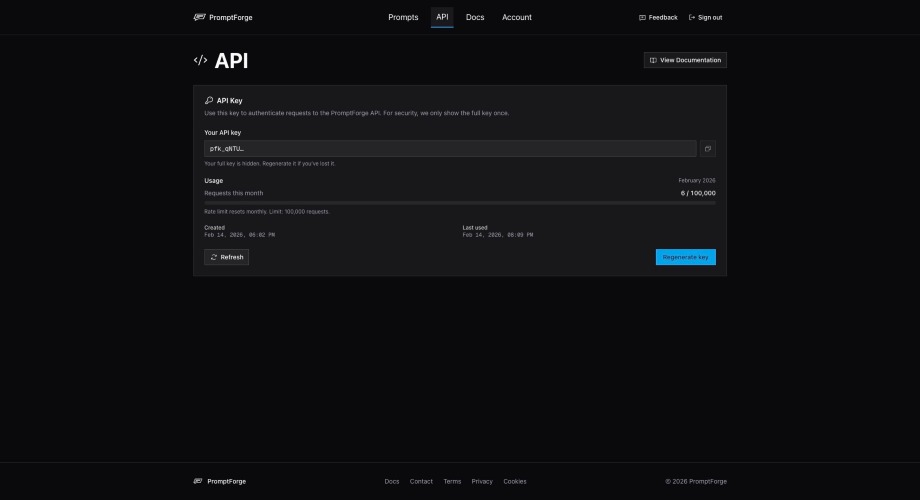

The prompt management API for teams shipping AI products.

If you're building with LLMs, you've felt the pain: prompts are hardcoded in your codebase, every tweak requires a deploy, and there's no way to track what changed or roll back. PromptForge fixes all of that.

How it works, in 3 steps:

1. Create prompt templates: Write prompts with {{variable}} syntax. One template serves unlimited use cases.
2. Version control automatically: Every edit creates an immutable version. Pin API calls to a specific version for production stability, or always fetch the latest.
3. Serve via REST API: Fetch any prompt with a single HTTP request. Pass variables as query params, get back interpolated content in under 50ms. No SDK required.

Key features:

- Dynamic variable interpolation ({{role}}, {{task}}, {{tone}})
- Automatic version history with version pinning
- RESTful API with Bearer token auth
- Works with any LLM: OpenAI, Anthropic Claude, Google Gemini, Meta Llama, Mistral, Grok, and more
- Rate limit headers in every response
- API key management built in

Why it matters:

Teams that decouple prompt logic from application code ship prompt improvements up to 3x faster (Gradient Flow, 2025). Research from Google DeepMind shows that even minor prompt wording changes can shift output quality by 10-25%. You shouldn't need a full deploy cycle to test that.
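
To make the three steps above concrete, here is a minimal Python sketch of what {{variable}} interpolation and a version-pinned fetch could look like. It is illustrative only: the base URL, the `/prompts/{id}` path, the `version` query parameter, and the helper names `interpolate` and `build_request` are all assumptions, since the text above does not specify PromptForge's actual endpoints. It uses only the standard library, in keeping with the "no SDK required" claim, and builds the request without sending it.

```python
import re
from typing import Dict, Optional
from urllib.parse import urlencode
from urllib.request import Request

# ASSUMPTION: placeholder base URL; the real PromptForge host and paths are not given.
BASE_URL = "https://api.promptforge.example/v1"

# Matches {{name}} with optional whitespace inside the braces.
_PLACEHOLDER = re.compile(r"\{\{\s*(\w+)\s*\}\}")


def interpolate(template: str, variables: Dict[str, str]) -> str:
    """Local illustration of {{variable}} substitution.

    Raises KeyError if the template names a variable that was not supplied.
    """
    def substitute(match):
        name = match.group(1)
        if name not in variables:
            raise KeyError(f"missing variable: {name}")
        return str(variables[name])

    return _PLACEHOLDER.sub(substitute, template)


def build_request(prompt_id: str, api_key: str,
                  variables: Dict[str, str],
                  version: Optional[int] = None) -> Request:
    """Build (but do not send) a GET request for an interpolated prompt.

    ASSUMPTION: variables travel as query params and a pinned version is
    selected with a hypothetical `version` param; omitting it would mean
    "fetch the latest". A template variable that is itself named
    "version" would collide with that param in this sketch.
    """
    params = dict(variables)
    if version is not None:
        params["version"] = str(version)
    url = f"{BASE_URL}/prompts/{prompt_id}?{urlencode(params)}"
    # Bearer token auth, as listed under key features.
    return Request(url, headers={"Authorization": f"Bearer {api_key}"})


if __name__ == "__main__":
    template = "You are a {{role}}. {{task}} Keep the tone {{tone}}."
    values = {"role": "support agent", "task": "Answer the question.", "tone": "friendly"}
    print(interpolate(template, values))
    print(build_request("welcome", "YOUR_API_KEY", values, version=3).full_url)
```

Passing the resulting `Request` to `urllib.request.urlopen` would perform the actual call against a real endpoint. Pinning `version` in production and omitting it in staging is one way to get the stability-versus-latest trade-off described in step 2.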