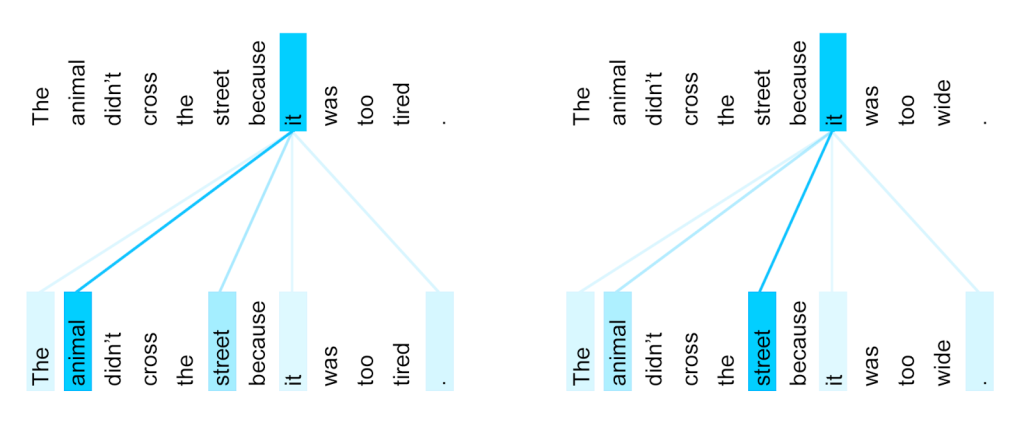

Google’s solution is what’s called an attention mechanism, built into a system it calls Transformer. It compares each word to every other word in the sentence to see whether any of them affect one another in some key way: whether “he” or “she” is speaking, for instance, or which sense of a word like “bank” is meant. As the translated sentence is constructed, the attention mechanism compares each newly appended word to every other one. This gif illustrates the whole process. Well, kind of.
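For the curious, the "compare each word to every other word" idea can be sketched in a few lines of Python. This is a toy illustration, not Google’s implementation: the two-number word vectors are made up, and the learned projections, multiple attention heads, and position information that a real Transformer uses are all left out. The function names are hypothetical.

```python
import math

def softmax(scores):
    """Turn raw similarity scores into weights that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    """Dot product: a simple measure of how similar two vectors are."""
    return sum(x * y for x, y in zip(a, b))

def self_attention(vectors):
    """Compare every word vector to every other one (itself included).

    Returns the blended vectors and the attention weights per word.
    """
    dim = len(vectors[0])
    scale = math.sqrt(dim)  # scaling used in scaled dot-product attention
    outputs, all_weights = [], []
    for query in vectors:
        # Score this word against each word in the sentence.
        scores = [dot(query, key) / scale for key in vectors]
        weights = softmax(scores)
        # Blend all word vectors according to those weights.
        mixed = [sum(w * v[i] for w, v in zip(weights, vectors))
                 for i in range(dim)]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights

# Made-up 2-d vectors, chosen only for illustration (assumption).
words = ["the", "river", "bank"]
vectors = [[0.1, 0.0], [1.0, 0.2], [0.9, 0.4]]

outputs, weights = self_attention(vectors)
for word, row in zip(words, weights):
    print(word, [round(w, 2) for w in row])
```

Each printed row shows how much one word "attends" to every word in the sentence. With these made-up numbers, "bank" gives more weight to "river" than to "the", which is the kind of context signal the article describes.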

Google’s Transformer

A Novel Neural Network Architecture for Language Understanding
