Artificial Intelligence Services Development: How to Build AI That Works in Production

The real question behind artificial intelligence services development is simple: how do you ship an AI feature that stays useful after the demo – accurate enough, fast enough, safe enough, and measurable in business terms. In 2026, that usually means combining an LLM with your data, adding evaluation and monitoring from day one, and treating “AI” like a product with owners, budgets, and reliability targets.

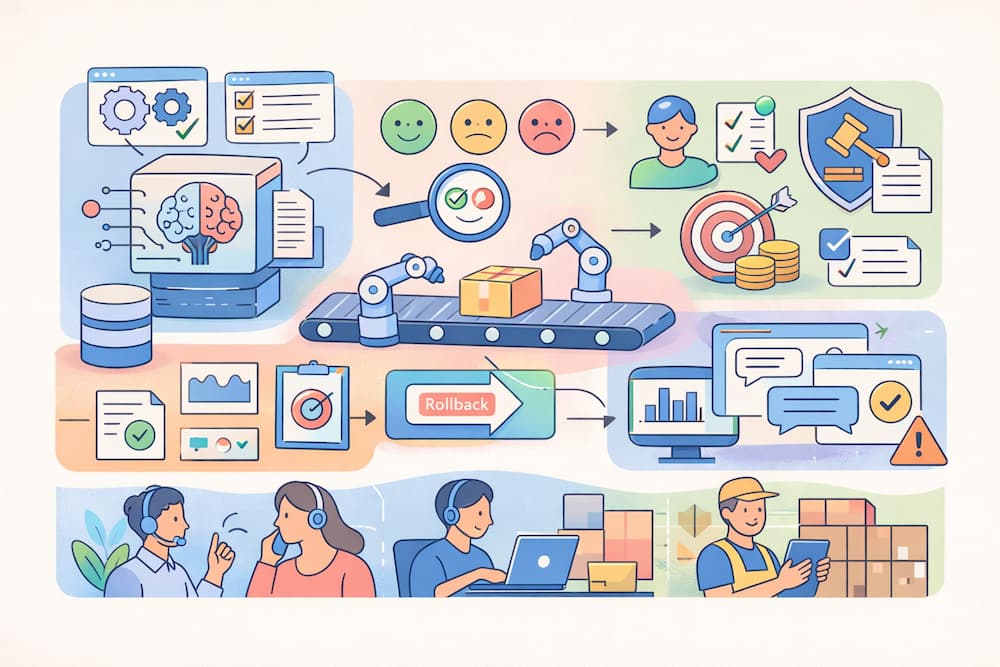

This is what separates AI product development from prototypes: disciplined machine learning engineering, an MLOps pipeline, and AI governance and compliance that’s designed into the system.

A demo can look great and still fail in production. Shipping AI means the feature stays accurate, fast, safe, and measurable after real users arrive.

In 2026, production-grade AI product development usually combines:

- a capable LLM,

- your enterprise data,

- evaluation from day one,

- clear owners, budgets, and reliability targets.

It also needs machine learning engineering around the model – deployment discipline, an MLOps pipeline, and explicit checks for model monitoring and drift.

That’s the practical difference between experiments and enterprise AI solutions that teams can operate.

How to find trustworthy AI development services

If you’re evaluating partners for AI integration services, generative AI development, or full custom AI development, filter for production discipline – not presentation polish.

Three questions that separate delivery teams from slide decks:

- “Show an evaluation report.” Not accuracy claims – an actual eval set, how it’s maintained, and what happens when performance drops.

- “What’s your rollback plan?” If a model update spikes failure rate, can they revert behavior safely within hours?

- “How do you handle governance?” Ask how they map risks, log decisions, and design oversight – especially for AI agents / agentic workflows.

Also watch for teams that over-promise with buzzwords. Reliable artificial intelligence development services are hard to find, and strong AI product development teams tend to say: “We’ll start with RAG, measure, then decide if fine-tuning is worth it.” That’s a sign they expect to be judged on outcomes, not hype.

Artificial Intelligence Services Development starts with a measurable business case

Many AI initiatives stall because the team never commits to one outcome and a production definition of done. Write one sentence the business can track: “Reduce average handle time in support by 18% for Tier-1 tickets, without increasing escalations.”

Then attach two numbers:

- value per unit (for example, cost per minute of support time),

- an error budget (how many wrong answers you can tolerate before trust drops).

A quick calculator before you scope the build:

- monthly tickets: 30,000

- minutes saved per ticket (target): 0.6

- cost per support minute: €0.55

- expected monthly value: 30,000 × 0.6 × 0.55 = €9,900
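The same arithmetic as a small Python sketch, so the inputs can be varied before scoping. The function name `expected_monthly_value` is illustrative, not part of any library, and the figures are the ones from the list above.

```python
def expected_monthly_value(monthly_tickets: int,
                           minutes_saved_per_ticket: float,
                           cost_per_minute: float) -> float:
    """Return expected monthly value in the currency of cost_per_minute."""
    if min(monthly_tickets, minutes_saved_per_ticket, cost_per_minute) < 0:
        raise ValueError("inputs must be non-negative")
    # Round to cents so floating-point noise does not leak into reports.
    return round(monthly_tickets * minutes_saved_per_ticket * cost_per_minute, 2)


# Figures from the worked example: 30,000 tickets, 0.6 min saved, EUR 0.55/min.
value = expected_monthly_value(30_000, 0.6, 0.55)
print(f"Expected monthly value: EUR {value:,.2f}")
```

Halving the minutes-saved assumption halves the result, which is a quick way to see how sensitive the business case is to the target.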

With that, you can choose the right first release: RAG, a narrow workflow automation, or a tool-using agent.

Why this matters now: analyst forecasts point to AI moving into core systems. Gartner, for example, forecasts worldwide AI spending at $2.52T in 2026.

Architecture choices: RAG, LLM fine-tuning, and AI agents

When someone says “we need AI,” they usually need one of three delivery patterns used in AI development services and AI integration services. In more advanced enterprise implementations, these often evolve into agentic AI development services, where AI agents not only generate outputs but also take controlled actions within defined workflows.

| Pattern | Best when | Cost to plan for |
|---|---|---|
| RAG (retrieval-augmented generation) | answers must cite internal sources (policies, docs, catalogs) | indexing, chunking strategy, retrieval evaluation, citation coverage |
| LLM fine-tuning | outputs must match a strict format or domain language | dataset curation, retraining cadence, drift checks |
| AI agents / agentic workflows | the system must take actions (tickets, orders, updates) | permissions, audit logs, failure handling, safe rollback |

A practical rule for custom AI development: start with RAG unless you can show that fine-tuning pays back. Add agentic steps when tools and permissions are tightly scoped.

Artificial intelligence development for enterprise services tends to move faster when the first release is narrow (one workflow, one team, one KPI) and expands from there.

Industry expectations are shifting toward agentic features inside apps – Gartner forecasts 40% of enterprise applications will include task-specific AI agents by 2026.

Production reliability: evaluation, MLOps, and monitoring

This is where generative AI development turns into a system you can run.

The common mistake is manual prompt testing. The production fix is an MLOps pipeline with automated evaluation gates.

A minimum setup usually includes:

- an offline evaluation set (200–2,000 examples depending on risk),

- automated regression tests (prompts, retrieval, tools),

- telemetry: latency, cost, refusal rate, citation coverage,

- drift detection: shifts in queries, data freshness, and hallucination rates,

- data quality and evaluation checks for the knowledge base (duplicates, stale pages, missing sources).
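The knowledge-base checks in the last item (duplicates, stale pages, missing sources) can be sketched as follows. The `Page` fields, the 180-day staleness window, and the whitespace/case normalization are all assumptions for illustration; a real pipeline would tune each of them.

```python
import hashlib
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Dict, List


@dataclass
class Page:
    page_id: str
    text: str
    source_url: str      # empty string means no source was recorded
    last_updated: date


def audit_knowledge_base(pages: List[Page], today: date,
                         max_age_days: int = 180) -> Dict[str, List[str]]:
    """Flag page ids that are duplicates, stale, or missing a source."""
    report: Dict[str, List[str]] = {"duplicates": [], "stale": [], "missing_source": []}
    seen_digests = set()
    cutoff = today - timedelta(days=max_age_days)
    for page in pages:
        # Exact-duplicate detection after collapsing case and whitespace.
        normalized = " ".join(page.text.lower().split())
        digest = hashlib.sha256(normalized.encode("utf-8")).hexdigest()
        if digest in seen_digests:
            report["duplicates"].append(page.page_id)
        seen_digests.add(digest)
        if page.last_updated < cutoff:
            report["stale"].append(page.page_id)
        if not page.source_url.strip():
            report["missing_source"].append(page.page_id)
    return report


pages = [
    Page("a", "Refund policy: 30 days.", "https://example.com/refunds", date(2025, 12, 1)),
    Page("b", "refund   policy: 30 days.", "https://example.com/refunds-copy", date(2025, 12, 10)),
    Page("c", "Shipping rates", "", date(2024, 1, 1)),
]
report = audit_knowledge_base(pages, today=date(2026, 1, 15))
print(report)
```

Hashing only catches exact copies after normalization; near-duplicate detection would need a similarity measure, which is out of scope for this sketch.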

Go-live checklist

- Define one KPI and one harm metric (for example, wrong refunds issued).

- Build a gold dataset (real queries, anonymized, labeled).

- Choose an architecture (RAG vs fine-tune vs agent) and document why.

- Add evaluation gates to CI (fail the build if quality drops).

- Implement fallbacks (search-only mode, human handoff, safe refusal).

- Set SLOs for latency and answer quality; share them internally.

- Review failure cases weekly and feed them into the next iteration.
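The "evaluation gates in CI" step could look roughly like the sketch below. It assumes a gold set of query/expected pairs and a crude substring check for scoring; `stub_answer` is a hypothetical stand-in for the real system, and the 90% threshold is an illustrative default rather than a recommendation.

```python
from typing import Callable, Dict, List, Tuple

GoldCase = Dict[str, str]  # {"query": ..., "expected": ...}


def evaluate(gold_set: List[GoldCase],
             answer_fn: Callable[[str], str]) -> Tuple[float, List[str]]:
    """Return (pass_rate, failed_queries) using a simple substring check."""
    if not gold_set:
        raise ValueError("gold set is empty")
    failed = [
        case["query"]
        for case in gold_set
        if case["expected"].lower() not in answer_fn(case["query"]).lower()
    ]
    pass_rate = (len(gold_set) - len(failed)) / len(gold_set)
    return pass_rate, failed


def gate_exit_code(pass_rate: float, min_pass_rate: float = 0.9) -> int:
    """Return 0 when quality holds and 1 when the build should fail."""
    return 0 if pass_rate >= min_pass_rate else 1


def stub_answer(query: str) -> str:
    """Hypothetical stand-in for the deployed assistant."""
    canned = {"What is the refund window?": "Refunds are accepted within 30 days."}
    return canned.get(query, "I don't know.")


gold = [
    {"query": "What is the refund window?", "expected": "30 days"},
    {"query": "Do you ship to Norway?", "expected": "yes"},
]
rate, failed = evaluate(gold, stub_answer)
print(f"pass rate: {rate:.0%}, failed: {failed}")
print("exit code:", gate_exit_code(rate))
```

In a CI job, the script would end with `raise SystemExit(gate_exit_code(rate))` so a quality drop fails the build. Substring matching is the weakest reasonable scorer; graded rubrics or model-based judges are common replacements once the gate itself is in place.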

If you already run SRE practices, borrow the error budget mindset: when reliability dips, you slow down changes until the system recovers.
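A minimal sketch of that mindset, assuming the budget is expressed as an allowed wrong-answer rate over a reporting window. The 25% caution threshold and the three policy labels are illustrative choices, not an established standard.

```python
def budget_remaining(total_answers: int, wrong_answers: int,
                     allowed_error_rate: float) -> float:
    """Fraction of the error budget still unspent (negative when overspent)."""
    if total_answers <= 0:
        raise ValueError("total_answers must be positive")
    if not 0 < allowed_error_rate < 1:
        raise ValueError("allowed_error_rate must be between 0 and 1")
    allowed_wrong = total_answers * allowed_error_rate
    return 1 - wrong_answers / allowed_wrong


def release_policy(remaining: float, caution_threshold: float = 0.25) -> str:
    """Map remaining budget to a release posture."""
    if remaining <= 0:
        return "freeze"   # budget spent: only reliability fixes ship
    if remaining < caution_threshold:
        return "slow"     # budget nearly spent: reduce change volume
    return "normal"


# 10,000 answers in the window, 1% allowed to be wrong, 60 actually wrong.
remaining = budget_remaining(10_000, 60, 0.01)
print(f"budget remaining: {remaining:.0%} -> {release_policy(remaining)}")
```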

A small monitoring table that keeps teams aligned:

| What to monitor | Example target | Why it matters |
|---|---|---|
| p95 latency | ≤ 2.5s | slow answers get ignored |
| groundedness / citation rate | ≥ 80% | tracks RAG quality and reduces hallucinations |
| escalation rate | ≤ baseline + 5% | prevents ops overload |
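The targets in the table can be checked mechanically. The sketch below uses a nearest-rank p95 and reads "baseline + 5%" as a relative increase (baseline × 1.05); that reading is an assumption, and a team that means five percentage points would change one line.

```python
from typing import Dict, Sequence


def p95(values: Sequence[float]) -> float:
    """Nearest-rank 95th percentile."""
    if not values:
        raise ValueError("no samples")
    ordered = sorted(values)
    rank = (95 * len(ordered) + 99) // 100  # ceil(0.95 * n) in integer math
    return ordered[rank - 1]


def check_targets(latencies_s: Sequence[float],
                  cited_answers: int,
                  total_answers: int,
                  escalation_rate: float,
                  baseline_escalation_rate: float) -> Dict[str, bool]:
    """Return True per metric when the example target from the table is met."""
    if total_answers <= 0:
        raise ValueError("total_answers must be positive")
    return {
        "p95_latency": p95(latencies_s) <= 2.5,
        "citation_rate": cited_answers / total_answers >= 0.80,
        # Assumption: "+5%" is relative to the baseline, not percentage points.
        "escalation_rate": escalation_rate <= baseline_escalation_rate * 1.05,
    }


status = check_targets(
    latencies_s=[1.0] * 19 + [4.0],
    cited_answers=85,
    total_answers=100,
    escalation_rate=0.12,
    baseline_escalation_rate=0.10,
)
print(status)
```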

For delivery discipline, the DORA metrics map cleanly to AI release cycles: deployment frequency, lead time for changes, change failure rate, and time to restore service.

Governance and compliance: build it into the feature

If the system touches decisions, money, safety, or rights, AI governance and compliance must be part of the product scope.

A pragmatic baseline is the NIST AI Risk Management Framework, organized around Govern, Map, Measure, Manage.

In Europe, the EU AI Act also pushes organizations toward stronger operational habits: documentation, oversight, and logs – even when the system is not classified as high-risk.

A solid governance baseline for a first release:

- model and prompt versioning with change logs,

- clear human oversight points (when to block, when to review),

- least-privilege access to tools and data sources,

- red-team scenarios: jailbreaks, sensitive data leakage, risky actions,

- audit-ready logs (retrieved sources and tool calls).
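Two of these items, least-privilege tool access and audit-ready logs, fit in one small sketch. The role name, tool names, and log fields are hypothetical; the point is that every tool call is checked against an allowlist and recorded with the model and prompt versions and the retrieved sources, whether or not it is allowed.

```python
import json
from datetime import datetime, timezone
from typing import Any, Dict, List, Set

# Hypothetical role-to-tool allowlist; anything not listed is denied.
ALLOWED_TOOLS: Dict[str, Set[str]] = {
    "support_assistant": {"lookup_order", "create_ticket"},
}


def authorize_tool_call(role: str, tool: str, args: Dict[str, Any], *,
                        model_version: str, prompt_version: str,
                        sources: List[str], audit_log: List[str]) -> bool:
    """Check the allowlist and append one JSON audit record per attempt."""
    allowed = tool in ALLOWED_TOOLS.get(role, set())
    audit_log.append(json.dumps({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "role": role,
        "tool": tool,
        "args": args,
        "allowed": allowed,
        "model_version": model_version,
        "prompt_version": prompt_version,
        "retrieved_sources": sources,
    }, sort_keys=True))
    return allowed


audit_log: List[str] = []
ok = authorize_tool_call(
    "support_assistant", "issue_refund", {"order_id": "A-1001", "amount": 40},
    model_version="model-2026-01", prompt_version="support-v7",
    sources=["policies/refunds.md"], audit_log=audit_log,
)
print("allowed:", ok)
print(audit_log[0])
```

Denied attempts are logged too, since a pattern of blocked calls is often the first visible sign of a jailbreak attempt or a mis-scoped workflow.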

How to choose a vendor without buying “demo output”

When you evaluate enterprise AI solutions providers, filter for production discipline.

The same three questions apply, each with what a good answer should contain:

- “Show an evaluation report.” (dataset, methodology, pass/fail thresholds, maintenance plan)

- “What’s your rollback plan?” (hours, not weeks)

- “How do you handle governance for tool-using systems?” (risk mapping, logging, oversight)

Teams that ship tend to propose a simple sequence: start with RAG, measure, then decide whether LLM fine-tuning is worth the ongoing cost.

Artificial intelligence services development succeeds when scope is narrow, evaluation is automated, and the system is built to handle messy inputs, not perfect demos.